Peter Hedman

- Senior Staff Research Scientist, Meta (London) [firstname].j.[lastname]@gmail.com Twitter Bluesky Scholar

I’m a researcher at Meta in London, where I’m building immersive 3D experiences.

I’ve worked on 3D photos (1, 2, 3), 3D videos, and NeRF flythroughs for Google Maps. Formally, my research interests are view synthesis, image-based rendering, neural rendering, and real-time graphics.

PUBLICATIONS

|

Free-Range Gaussians: Non-Grid-Aligned Generative 3D Gaussian Reconstruction (free-range-gaussians.github.io) Ahan Shabanov, Peter Hedman, Ethan Weber, Zhengqin Li, Denis Rozumny, Gael Le Lan, Naina Dhingra, Lei Luo, Andrea Vedaldi, Christian Richardt, Andrea Tagliasacchi, Bo Zhu, Numair Khan arXiv 2026 |

|

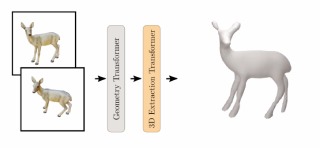

Fus3D: Decoding Consolidated 3D Geometry from Feed-forward Geometry Transformer Latents (lorafib.github.io/fus3d) Laura Fink, Linus Franke, George Kopanas, Marc Stamminger, Peter Hedman arXiv 2026 |

|

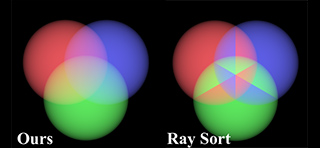

EVER: Exact Volumetric Ellipsoid Rendering for Real-time View Synthesis (half-potato.gitlab.io/posts/ever) Alexander Mai, Peter Hedman, George Kopanas, Dor Verbin, David Futschik, Qiangeng Xu, Falko Kuester, Jonathan T. Barron, Yinda Zhang ICCV 2025 (Oral) |

|

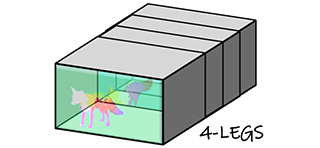

4-LEGS: 4D Language Embedded Gaussian Splatting (tau-vailab.github.io/4-LEGS) Gal Fiebelman, Tamir Cohen, Ayellet Morgenstern, Peter Hedman, Hadar Averbuch-Elor Eurographics 2025 |

|

NeRF-Casting: Improved View-Dependent Appearance with Consistent Reflections (nerf-casting.github.io) Dor Verbin, Pratul Srinivasan, Peter Hedman, Ben Mildenhall, Benjamin Attal, Richard Szeliski, Jonathan T. Barron SIGGRAPH Asia 2024 |

|

Flash Cache: Reducing Bias in Radiance Cache Based Inverse Rendering (benattal.github.io/flash-cache) Benjamin Attal, Dor Verbin, Ben Mildenhall, Peter Hedman, Jonathan T. Barron, Matt O'Toole, Pratul Srinivasan ECCV 2024 (Oral) |

|

Binary Opacity Grids: Capturing Fine Geometric Detail for Mesh-Based View Synthesis (creiser.github.io/binary_opacity_grid) Christian Reiser, Stephan Garbin, Pratul Srinivasan, Dor Verbin, Richard Szeliski, Ben Mildenhall, Jonathan T. Barron, Peter Hedman*, Andreas Geiger* SIGGRAPH 2024 |

|

SMERF: Streamable Memory Efficient Radiance Fields for Real-Time Large-Scene Exploration (smerf-3d.github.io) Daniel Duckworth*, Peter Hedman*, Christian Reiser, Peter Zhizhin, Jean-François Thibert, Mario Lučić, Richard Szeliski, Jonathan T. Barron SIGGRAPH 2024 (Honorable Mention) |

|

InterNeRF: Scaling Radiance Fields via Parameter Interpolation (arxiv.org/abs/2406.11737) Clinton Wang, Peter Hedman, Polina Golland, Jonathan T. Barron, Daniel Duckworth CVPR Neural Rendering Intelligence 2024 |

|

Eclipse: Disambiguating Illumination and Materials using Unintended Shadows (dorverbin.github.io/eclipse) Dor Verbin, Ben Mildenhall, Peter Hedman, Jonathan T. Barron, Todd Zickler, Pratul Srinivasan CVPR 2024 (Oral) |

|

Inpaint3D: 3D Scene Content Generation using 2D Inpainting Diffusion (inpaint3d.github.io) Kira Prabhu*, Jane Wu*, Lynn Tsai*, Peter Hedman, Dan B Goldman, Ben Poole, Michael Broxton arXiv 2023 |

|

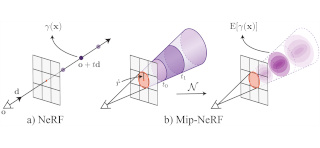

Zip-NeRF: Anti-Aliased Grid-Based Neural Radiance Fields (jonbarron.info/zipnerf) Jonathan T. Barron, Ben Mildenhall, Dor Verbin, Pratul Srinivasan, Peter Hedman ICCV 2023 (Oral, Best Paper Finalist) |

|

Vox-E: Text-guided Voxel Editing of 3D Objects (tau-vailab.github.io/Vox-E) Etai Sella, Gal Fiebelman, Peter Hedman, Hadar Averbuch-Elor ICCV 2023 |

|

MERF: Memory-Efficient Radiance Fields for Real-time View Synthesis in Unbounded Scenes (creiser.github.io/merf) Christian Reiser, Richard Szeliski, Dor Verbin, Pratul Srinivasan, Ben Mildenhall, Andreas Geiger, Jonathan T. Barron, Peter Hedman SIGGRAPH 2023 |

|

BakedSDF: Meshing Neural SDFs for Real-Time View Synthesis (bakedsdf.github.io) Lior Yariv*, Peter Hedman*, Christian Reiser, Dor Verbin, Pratul Srinivasan, Richard Szeliski, Jonathan T. Barron, Ben Mildenhall SIGGRAPH 2023 |

|

MobileNeRF: Exploiting the Polygon Rasterization Pipeline for Efficient Neural Field Rendering on Mobile Architectures (mobile-nerf.github.io) Zhiqin Chen, Thomas Funkhouser, Peter Hedman, Andrea Tagliasacchi CVPR 2023 (Best Paper Award Candidate) |

|

AligNeRF: High-Fidelity Neural Radiance Fields via Alignment-Aware Training (yifanjiang19.github.io/alignerf) Yifan Jiang, Peter Hedman, Ben Mildenhall, Dejia Xu, Jonathan T. Barron, Zhangyang Wang, Tianfan Xue CVPR 2023 |

|

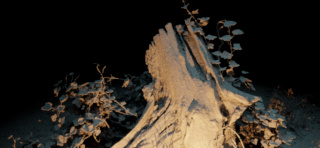

NeRF in the Dark: High Dynamic Range View Synthesis from Noisy Raw Images (bmild.github.io/rawnerf) Ben Mildenhall, Peter Hedman, Ricardo Martin-Brualla, Pratul Srinivasan, Jonathan T. Barron CVPR 2022 (Oral) |

|

Mip-NeRF 360: Unbounded Anti-Aliased Neural Radiance Fields (jonbarron.info/mipnerf360) Jonathan T. Barron, Ben Mildenhall, Dor Verbin, Pratul P. Srinivasan and Peter Hedman CVPR 2022 (Oral) |

|

Ref-NeRF: Structured View-Dependent Appearance for Neural Radiance Fields (dorverbin.github.io/refnerf) Dor Verbin, Peter Hedman, Ben Mildenhall, Todd Zickler, Jonathan T. Barron and Pratul P. Srinivasan CVPR 2022 (Oral, Best Student Paper Honorable Mention), TPAMI preprint |

|

HyperNeRF: A Higher-Dimensional Representation for Topologically Varying Neural Radiance Fields (hypernerf.github.io) Keunhong Park, Utkarsh Sinha, Peter Hedman, Jonathan T. Barron, Sofien Bouaziz, Dan B. Goldman, Ricardo Martin-Brualla and Steven M. Seitz SIGGRAPH Asia 2021 |

|

Baking Neural Radiance Fields for Real-Time View Synthesis (nerf.live) Peter Hedman, Pratul P. Srinivasan, Ben Mildenhall, Jonathan T. Barron and Paul Debevec ICCV 2021 (Oral), TPAMI 2025 |

|

Mip-NeRF: A Multiscale Representation for Anti-Aliasing Neural Radiance Fields (jonbarron.info/mipnerf) Jonathan T. Barron, Ben Mildenhall, Matthew Tancik, Peter Hedman, Ricardo Martin-Brualla and Pratul P. Srinivasan ICCV 2021 (Oral, Best Paper Honorable Mention) |

|

Immersive Light Field Video with a Layered Mesh Representation (augmentedperception.github.io/deepviewvideo) Michael Broxton, John Flynn, Ryan Overbeck, Daniel Erickson, Peter Hedman, Matthew DuVall, Jason Dourgarian, Jay Busch, Matt Whalen and Paul Debevec SIGGRAPH 2020 |

|

Image-Based Rendering of Cars using Semantic Labels and Approximate Reflection Flow (repo-sam.inria.fr/fungraph/ibr-cars-semantic) Simon Rodriguez, Siddhant Prakash, Peter Hedman and George Drettakis i3D 2020 |

|

Deep Blending for Free-Viewpoint Image-Based Rendering (visual.cs.ucl.ac.uk/pubs/deepblending) Peter Hedman, Julien Philip, True Price, Jan-Michael Frahm, George Drettakis and Gabriel Brostow SIGGRAPH Asia 2018 |

|

Instant 3D Photography (visual.cs.ucl.ac.uk/pubs/instant3d) Peter Hedman and Johannes Kopf SIGGRAPH 2018 |

|

Casual 3D Photography (visual.cs.ucl.ac.uk/pubs/casual3d) Peter Hedman, Suhib Alsisan, Richard Szeliski and Johannes Kopf SIGGRAPH Asia 2017 |

|

Scalable Inside-Out Image-Based Rendering (visual.cs.ucl.ac.uk/pubs/insideout) Peter Hedman, Tobias Ritschel, George Drettakis and Gabriel Brostow SIGGRAPH Asia 2016 |

|

Sequential Monte Carlo Instant Radiosity (visual.cs.ucl.ac.uk/pubs/smcir) Peter Hedman, Tero Karras and Jaakko Lehtinen i3D 2016, TVCG 2017 |

|

Multi-view Reconstruction of Highly Specular Surfaces in Uncontrolled Environments (visual.cs.ucl.ac.uk/pubs/shapefromreflections) Clément Godard*, Peter Hedman*, Wenbin Li and Gabriel J. Brostow 3DV 2015 (Oral) *Joint first authors. |

AWARDS, TALKS AND PUBLICITY

| June 2026 | CVPR workshop (ScanNet++) talk: "You Can't Wear a World Model: Generating 3D for AR/VR". |

| Dec 2025 | CVMP keynote talk: "The Last Mile of Research for Production-Ready View-Synthesis". |

| May 2025 | Elected as a Eurographics Junior Fellow. |

| July 2024 | SIGGRAPH Honorable Mention Award for SMERF. |

| June 2024 | CVPR workshop (XRNeRF) talk: "Transcoding for Fast View Synthesis". |

| May 2023 | i3D keynote talk: "Scaling NeRF Up and Down". |

| June 2022 | CVPR Best Student Paper Honorable Mention Award for Ref-NeRF. |

| May 2022 | Our work on NeRF flythroughs for Google Maps presented by Alphabet CEO Sundar Pichai at Google I/O (8:48). |

| Oct 2021 | ICCV Best Paper Honorable Mention Award for Mip-NeRF. |

| Aug 2020 | SIGGRAPH Immersive Pavilion BEST IN SHOW Award for Immersive Light Field Video with a Layered Mesh Representation. |

| Aug 2019 | SIGGRAPH Course (Capture4VR) talk: 3D Photography. |

| June 2018 | Instant 3D Photography highlighted at the Facebook F8 Conference keynote (1:08:45). |

| Apr 2017 | Casual 3D Photography presented by Facebook CEO Mark Zuckerberg at F8 (11:45). |

| Nov 2016 | The Finnish Academic Association for Mathematics and Natural Sciences (MAL) prize for the most distinguished Master's thesis. |

| Apr 2016 | Rabin Ezra Scholarship for doctoral students in computer graphics, imaging and vision. |

ACADEMIC SERVICE

| Area Chair / Papers Committee | CVPR (2026, 2025, 2024), SIGGRAPH (2023, 2022, session chair), EGSR (2022, 2021, session chair), HPG (2024, 2021) |

| Reviewer | SIGGRAPH, SIGGRAPH Asia, CVPR, ECCV, ICCV, TOG, TVCG, 3DV, Computers & Graphics, Eurographics, Pacific Graphics |

| Teaching Assistant | Computer Graphics at University College London (2014, 2015, 2017). |

JOBS

| Oct 2025 |

Meta, Senior Staff Research Scientist, London U.K. Working on Hyperscape. |

| Nov 2024 - Oct 2025 |

Google DeepMind, Staff Research Scientist, London U.K. Worked at the intersection of 3D representations and generative video. |

| May 2022 - Nov 2024 |

Google, Senior Research Scientist, London U.K. Worked on Fast and Large-Scale Neural Radiance Fields. |

| Nov 2020 - May 2022 |

Google, Research Scientist, London U.K. Worked on Fast or Large-Scale Neural Radiance Fields. |

| Nov 2019 - Nov 2020 |

Google, Research Scientist, Los Angeles USA. Worked on Immersive Light Field Video with a Layered Mesh Representation (SIGGRAPH 2020). |

| Jun 2017 - Aug 2018 |

Pro Unlimited, Contingent worker @ Facebook (Computational photography group), London U.K. Developed Instant 3D Photography (SIGGRAPH 2018). |

| Jun 2016 - Jun 2017 |

Facebook, PhD research intern (Computational photography group), Seattle USA. Developed Casual 3D Photography (SIGGRAPH Asia 2017). |

| Dec 2013 - May 2014 |

NVIDIA, research intern (NVIDIA research), Helsinki Finland. Developed Sequential Monte Carlo Instant Radiosity (i3D 2016, TVCG 2017). |

| Jan 2012 - Nov 2013 | NVIDIA, Systems software engineer (mobile browser team), Helsinki Finland. |

| May 2011 - Jan 2012 | NVIDIA, software engineering intern (Flash3D team), Helsinki Finland. |

EDUCATION

| Aug 2014 - Jul 2019 |

PhD in Computer Science (University College London) Viewpoint-Free Photography for Virtual Reality (discovery.ucl.ac.uk/id/eprint/10078087) Supervisors: Gabriel Brostow and Tobias Ritschel. |

| Jan 2012 - Jun 2015 |

Master's degree in Computer Science (University of Helsinki) Sequential Monte Carlo Instant Radiosity (helda.helsinki.fi/handle/10138/156669). |

| Sep 2009 - Jan 2012 |

Bachelor's degree in Computer Science (University of Helsinki) Triangle based and voxel based rendering in real-time graphics (phogzone.com/bscthesis.pdf - in Swedish).